Using Apple HLS for video streaming in apps

Overview – Why Use Apple HLS

All of the apps JoSara MeDia currently has in the Apple App Store (except for the latest one, which prompted this article on Apple HLS) are self-contained: all of the media (video, audio, photos, maps, etc.) are embedded in the app. This means that if users are on a plane or anywhere else without a decent network connection, the apps still work fine, with no sections saying “you can only view this with an internet connection.”

This strategy works very well except for two main problems:

- the apps are large, frequently over the size limit Apple sets as the maximum for downloading over cellular. I have no data showing that this limits downloads, but it seems likely;

- if we want to migrate apps to the Apple TV platform (and of course we do!), we need a much more cloud-centric approach, as Apple TV has limited storage space.

These two issues prompted me to use the release of our Quebec City app as a testing ground for moving the videos in the app (the largest space-consuming media it contains) into on-demand “cloud” storage. I determined that the best solution was Apple HLS (HTTP Live Streaming).

There are still many things I am figuring out about using HLS, and I would welcome comments on this strategy.

What is Apple HLS and why would you use it

For most apps, there is no way to predict what bandwidth your users will have when they click on a video inside your app. And there is an “instant gratification” requirement (or near instant) that must be fulfilled when a user clicks on the play button.

Have you ever started a video, had it appear grainy or low quality, and then watched it sharpen as it plays? That is an example of HLS with variant playlists at work (other protocols do this as well).

Simply put, with Apple HLS a video is divided into a series of time-segment files (file extension .ts), which are listed in a playlist file (file extension .m3u8) that describes the video and its segments. The playlist is a human-readable text file that can be edited if needed (and I determined I needed to; see below).
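To make that structure concrete, here is a minimal Python sketch that writes an on-demand media playlist from a list of segment files and their durations. The segment names (fileSequence0.ts and so on) are hypothetical. The sketch only covers the basic tags from RFC 8216; a real segmenter such as mediafilesegmenter may emit additional tags.

```python
import math


def build_media_playlist(segments, version=3):
    """Return the text of an on-demand (VOD) HLS media playlist.

    segments: list of (uri, duration_in_seconds) tuples in playback order.
    """
    if not segments:
        raise ValueError("a playlist needs at least one segment")
    # RFC 8216: every EXTINF duration, rounded to the nearest integer, must be
    # <= EXT-X-TARGETDURATION, so derive the target from the longest segment.
    target = max(math.floor(duration + 0.5) for _, duration in segments)
    lines = [
        "#EXTM3U",
        f"#EXT-X-VERSION:{version}",  # version 3+ allows decimal EXTINF values
        f"#EXT-X-TARGETDURATION:{target}",
        "#EXT-X-MEDIA-SEQUENCE:0",
        "#EXT-X-PLAYLIST-TYPE:VOD",
    ]
    for uri, duration in segments:
        lines.append(f"#EXTINF:{duration:.3f},")  # segment length in seconds
        lines.append(uri)  # segment location, relative to the playlist
    lines.append("#EXT-X-ENDLIST")  # no more segments will be added
    return "\n".join(lines) + "\n"


print(build_media_playlist([
    ("fileSequence0.ts", 10.0),
    ("fileSequence1.ts", 10.0),
    ("fileSequence2.ts", 4.5),
]))
```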

On top of this there is a “variant playlist”: a master playlist file, in the same format, that points to other playlist files. The idea behind the variant playlist is to have versions of the video at multiple resolutions and bitrates, all with the same time segments (this should be prefaced with “I think”, and comments are appreciated). When a video player starts a video described by a variant playlist, it begins with the first playlist listed, normally the lowest-bandwidth one. That playlist is by definition smaller, so it should download and start playing the quickest, satisfying that most human need, instant gratification. The player then measures the bandwidth it is actually getting, takes into account the resolution the device can display, and ratchets up to the best playlist in the variant playlist to continue playing. I am assuming (by observation) that it only switches to a higher-resolution playlist at segment boundaries (which is also why I think the segments all have to be the same length).
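The switching behaviour described above can be illustrated with a deliberately simplified Python model. This is not how AVPlayer actually decides, because Apple does not publish its algorithm. The 0.8 safety factor and the rule “pick the highest declared bandwidth that fits” are assumptions made for illustration only. The model starts on the first listed variant and only reconsiders at segment boundaries, using the throughput measured while the previous segment downloaded.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    uri: str
    bandwidth: int  # declared peak bitrate, bits per second


def choose_variant(variants, measured_bps, safety=0.8):
    """Return the highest-bandwidth variant that fits within `safety` times the
    measured throughput, falling back to the lowest-bandwidth variant."""
    ordered = sorted(variants, key=lambda v: v.bandwidth)
    budget = measured_bps * safety
    chosen = ordered[0]
    for variant in ordered:
        if variant.bandwidth <= budget:
            chosen = variant
    return chosen


def simulate(variants, throughput_per_segment):
    """Return the variant URI played for each segment. Playback starts on the
    first listed variant; a new choice only takes effect at the next segment."""
    current = variants[0]
    played = []
    for measured_bps in throughput_per_segment:
        played.append(current.uri)
        current = choose_variant(variants, measured_bps)
    return played


ladder = [
    Variant("hls_400k.m3u8", 400_000),
    Variant("hls_1m.m3u8", 1_000_000),
    Variant("hls_2m.m3u8", 2_000_000),
]
print(simulate(ladder, [3_000_000, 3_000_000, 1_000_000]))
```

In this toy run, the first segment always plays at 400K, because nothing has been measured yet. The player then jumps to 2M, and drops back down once throughput falls.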

Options for building your videos and playlists

There are two links that provide standards for videos for Apple iOS devices and Apple TVs (links below):

- standards for iPhone and iPad (look at the section on creating variant playlists)

- standards for Apple TV

These standards overlap a bit. As you would expect, though, the Apple TV standards call for higher resolutions, because an Apple TV has an always-on, higher-bandwidth connection (Wi-Fi at minimum) compared with what one can expect on an iPhone or iPad.

To support iPhones, iPads and Apple TVs, the best strategy is to have three or four streams:

- a low-bandwidth/low-resolution stream for devices on cellular connections

- a mid-range stream for iPhones and iPads on Wi-Fi

- a high-bandwidth/high-resolution stream for always-connected devices like Apple TV

Thus the steps become:

- Convert your native video streams into these multiple resolutions;

- Build segmented files with playlists of each resolution;

- Build a variant playlist that points to each of the single playlists;

- Deploy

- When you deploy, make sure the variant playlist and files are on a content delivery network (CDN), which caches them around the world for faster delivery (only important if you expect worldwide customers…which you should).

- Put the video tags in your apps.

Converting your native video streams

My videos come in several shapes and resolutions, since they come from whatever device I have on me at the time. They are usually from an Olympus TG-1 (which has been with me through the Grand Canyon, in Hawaii, in cenotes in the Yucatan and now in Quebec City), my indestructible default for images and video, or from some kind of iOS device. Both are set to shoot at the highest quality possible. This makes the native videos very large (and the apps they are embedded in larger still).

There are several tools to convert the videos. These are the ones I’ve looked into:

- QuickTime – the current version of QuickTime is mostly useless for this. But QuickTime 7 does have all of the settings required by the standards linked above. One could set those exactly as specified, export the video, and then run the output through Apple’s mediafilesegmenter command-line tool. If you do not have an Amazon Web Services (AWS) account, this would most likely be the way to proceed…as long as this version of QuickTime is supported. To get to these options, go to File -> Export, select “Movie to MPEG-4” and click the “Options” button. All of the parameters suggested in the standards for iPhone and iPad are available there for tweaking and tuning. QuickTime 7 can still be downloaded from Apple at this link.

- Amazon Web Services (AWS) Elastic Transcoder – for those of us who are lazy like me, AWS offers Elastic Transcoder, which provides preset HLS options for 400K, 1M and 2M. The encodings are done through batch jobs, with detailed output selections. There are options for HLSv3 and HLSv4. HLSv4 requires a split between audio and video. It may be the newer standard, but I could not get the inputs and outputs right…so I went with HLSv3. The setup requires:

- Setting up S3 buckets that hold the original videos, plus destination buckets for the transcoded files and thumbnails (if that option is selected)

- Creating a “Pipeline” that uses these S3 buckets

- Setting up an Elastic Transcoder “job” (as an old programmer, I’m hoping this is a nod to the batch jobs of old!) in this pipeline, where you tell it what kinds of transcodes should come out of the job, and what kind of playlist.

- iMovie – iMovie has several output presets, but I did not find a way to easily adapt them to the settings in the standards.
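With Elastic Transcoder, the job can also be submitted from code rather than through the console. The sketch below builds the keyword arguments for boto3’s `create_job` call on the `elastictranscoder` client. It creates one output per HLS preset, each with a 10-second `SegmentDuration`, and an HLSv3 playlist that ties them together. The pipeline ID, object keys and preset IDs are placeholders: look up the real system preset IDs for the HLS 400K, 1M and 2M presets in the console. The playlist name `index` is a hypothetical choice.

```python
def build_hls_job(pipeline_id, input_key, output_prefix, presets, segment_seconds=10):
    """Build keyword arguments for Elastic Transcoder's CreateJob call.

    presets maps an output name (e.g. "hls_400k") to a system preset ID.
    """
    outputs = [
        {
            "Key": name,  # base name for this output's playlist and segments
            "PresetId": preset_id,
            "SegmentDuration": str(segment_seconds),  # the API takes a string
        }
        for name, preset_id in presets.items()
    ]
    return {
        "PipelineId": pipeline_id,
        "Input": {"Key": input_key},
        "OutputKeyPrefix": output_prefix,
        "Outputs": outputs,
        "Playlists": [
            {
                "Name": "index",  # hypothetical variant playlist name
                "Format": "HLSv3",
                "OutputKeys": [output["Key"] for output in outputs],
            }
        ],
    }


def submit(job_kwargs):
    """Send the job to AWS (needs boto3 plus configured credentials/region)."""
    import boto3  # imported lazily so the builder works without boto3

    client = boto3.client("elastictranscoder")
    return client.create_job(**job_kwargs)


job = build_hls_job(
    pipeline_id="PIPELINE-ID",  # placeholder
    input_key="BikeRide.mov",  # placeholder
    output_prefix="BikeRide/",
    presets={"hls_400k": "PRESET-400K", "hls_1m": "PRESET-1M", "hls_2m": "PRESET-2M"},
)
print(job["Playlists"])
```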

Building segmented files

Once your videos are converted, the next step is to build the segmented files (the ones ending in .ts) from them, plus the playlists that contain the metadata and location of those segmented files. There may be other tools, but I have found only two.

- Apple dev tools – Apple’s HLS tools require an Apple developer account. To find them, go to this Apple developer page and scroll down to the “Download” section on the right-hand side (requires developer login). The command for creating streams from “non-live” videos is mediafilesegmenter. To see its options, either read the man page (type “man mediafilesegmenter” at the command line) or just type the command for a summary. There are options for segment length, for creating a file for use in variant playlists, for encryption and more. Through much trial and error, I found that it worked best to use the “file base” option (-f) to put the files in a particular folder and to omit the “base URL” option (-b). (I used -b at first, not realizing that the variant playlist, which points at the individual stream playlists, can point into folders, which keeps things neat.) In the end, I used this command to create 10-second segments from my original (not the encoded) files, as a high-resolution/high-bandwidth option.

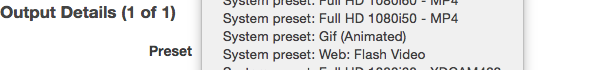

- Amazon Web Services (AWS) Elastic Transcoder – Elastic Transcoder not only converts/encodes the videos, but also builds the segments (and the variant playlists). As you can see from the prior screenshot, there are system presets for HLSv3 and HLSv4 (again, I used v3) for 400K, 1M and 2M. The job created in Elastic Transcoder builds the segmented files in designated folders with designated naming prefixes, all with the same segment duration. I have, however, seen some variance in quality when using Elastic Transcoder…or at least a disagreement between the Apple HLS Tools validator and the directives given in the Elastic Transcoder jobs. More on that in the results and errors section.
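If you prefer to script mediafilesegmenter, a thin Python wrapper like the one below can help. It uses the -f (file base) option described above, plus -t, which is assumed here to set the target segment duration. Check `man mediafilesegmenter` on your machine before relying on that flag. The file and folder names are hypothetical.

```python
import subprocess
from pathlib import Path


def segmenter_command(source, out_dir, target_seconds=10):
    """Build the mediafilesegmenter argument list.

    -f is the "file base" (output folder) option; -t is ASSUMED to set the
    target segment duration (verify with `man mediafilesegmenter`).
    No -b (base URL) is passed, matching the approach described above.
    """
    return ["mediafilesegmenter", "-t", str(target_seconds), "-f", str(out_dir), str(source)]


def run_segmenter(source, out_dir, target_seconds=10):
    """Run the segmenter (macOS with Apple's HLS tools installed)."""
    Path(out_dir).mkdir(parents=True, exist_ok=True)
    subprocess.run(segmenter_command(source, out_dir, target_seconds), check=True)


# Hypothetical example: segment the original clip into a BikeRideHI folder.
print(segmenter_command("BikeRide.mov", "BikeRide/BikeRideHI"))
```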

Building variant playlists

Finally, you need to build a variant playlist: a playlist that points to all of the other playlists, one for each resolution/bandwidth option.

- Apple dev tools – the variantplaylistcreator cmd-line command will take output files from the mediafilesegmenter cmd-line command and build a variant playlist.

- Amazon Web Services (AWS) Elastic Transcoder – are you detecting a pattern here? The final part of an Elastic Transcoder job is to specify either an HLSv3 or an HLSv4 variant playlist. I selected v3, as v4 requires separate audio and video streams and I could never quite get those to work.

- Manual – the playlist and variant playlist files are human readable and editable.
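Because the variant playlist is just text, building one by hand (or by script) is not hard. This minimal sketch writes one `#EXT-X-STREAM-INF` entry per stream, giving each stream's declared peak `BANDWIDTH` in bits per second and an optional `RESOLUTION`. The URIs and numbers in the example are hypothetical. The real `BANDWIDTH` values should come from the measured peak bitrates of your streams.

```python
def build_master_playlist(variants):
    """Return the text of a variant (master) playlist.

    variants: list of dicts with "uri" and "bandwidth" (peak bits per second),
    plus optional "resolution" ("WIDTHxHEIGHT") and "codecs".
    """
    lines = ["#EXTM3U"]
    for variant in variants:  # entries are written in the order given
        attrs = [f"BANDWIDTH={variant['bandwidth']}"]
        if "resolution" in variant:
            attrs.append(f"RESOLUTION={variant['resolution']}")
        if "codecs" in variant:
            attrs.append(f'CODECS="{variant["codecs"]}"')  # quoted-string attribute
        lines.append("#EXT-X-STREAM-INF:" + ",".join(attrs))
        lines.append(variant["uri"])  # the stream's own media playlist
    return "\n".join(lines) + "\n"


print(build_master_playlist([
    {"uri": "hls_400k.m3u8", "bandwidth": 400_000},
    {"uri": "hls_1m.m3u8", "bandwidth": 1_000_000},
    {"uri": "BikeRideHI/prog_index.m3u8", "bandwidth": 8_000_000, "resolution": "1920x1080"},
]))
```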

Currently, I am using a combination of Elastic Transcoder and manual editing. I take the variant playlist that comes out of Elastic Transcoder (which contains 400K, 1M and 2M playlists), then edit it to add the playlist I created using the mediafilesegmenter, the higher-res version. This gives a final variant playlist with four options that straddle the iOS device requirement list and the Apple TV requirement list.
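The manual edit described above, which adds the mediafilesegmenter output as a fourth entry in the Elastic Transcoder variant playlist, can be sketched as a small Python function. The folder name `BikeRideHI/prog_index.m3u8`, the 8 Mb/s bandwidth and the shortened AWS playlist text are hypothetical values for illustration.

```python
def add_variant(master_text, uri, bandwidth, resolution=None):
    """Append one #EXT-X-STREAM-INF entry to an existing master playlist."""
    if not master_text.lstrip().startswith("#EXTM3U"):
        raise ValueError("not an M3U8 playlist")
    if uri in (line.strip() for line in master_text.splitlines()):
        return master_text  # already listed; leave the playlist unchanged
    attrs = f"BANDWIDTH={bandwidth}"
    if resolution:
        attrs += f",RESOLUTION={resolution}"
    return master_text.rstrip("\n") + f"\n#EXT-X-STREAM-INF:{attrs}\n{uri}\n"


aws_master = "#EXTM3U\n#EXT-X-STREAM-INF:BANDWIDTH=400000\nhls_400k.m3u8\n"
print(add_variant(aws_master, "BikeRideHI/prog_index.m3u8", 8_000_000, "1920x1080"))
```

Running the function again with the same URI returns the text unchanged, so re-running the edit is harmless.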

Putting the videos into your apps

Most of my apps are HTML5, built on a format called HPUB. This lets me take advantage of multiple platforms, as HPUB files can be converted, with a bit of work, into ePub files for enhanced eBooks.

Using the videos in HTML5 is straightforward – just use the HTML5 `<video>` tag with the variant playlist (.m3u8) as its source.
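As a sketch of what that tag looks like when it is generated, for example while assembling HPUB pages with a script, the Python function below renders a `<video>` element that points at a variant playlist. The URL is a placeholder. Safari and WKWebView on iOS play .m3u8 sources in a `<video>` tag natively.

```python
from html import escape


def video_tag(playlist_url, poster_url=None):
    """Render an HTML5 <video> element whose source is an HLS playlist."""
    attrs = [f'src="{escape(playlist_url)}"', "controls", "playsinline"]
    if poster_url:
        attrs.append(f'poster="{escape(poster_url)}"')  # e.g. a transcoder thumbnail
    return f"<video {' '.join(attrs)}></video>"


print(video_tag("https://cdn.example.com/BikeRide/index.m3u8"))
```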

Apple HLS Results and Errors

In the end, the videos work, and seem to work for users around the world, with low or high bandwidth, as expected. I’m sure there are things that can be done to make them better.

I’ve used the mediastreamvalidator command from the Apple developer tools pretty extensively. It objects to some aspects of the files that AWS Elastic Transcoder generates, but it is also valuable for pointing out real problems.

Here are some changes I’ve made based on the validator, and other feedback:

Error: Illegal MIME type – this one took me a while. The .m3u8 files generated by AWS are fine, but files such as those generated by the mediafilesegmenter tool do not pass this check once uploaded. They get flagged with the error “--> Detail: MIME type: application/octet-stream”. In AWS S3 there is a drop-down list of MIME types in the “Metadata” section, but none of the MIME types Apple recommends are in it. The files generated by AWS have the MIME type “application/x-mpegURL”, which is one of the recommended ones. Since that type is not in the drop-down, it took me a while to realize that you can simply type a MIME type into the field, even if it is not in the list. Doh!
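The same fix can be applied at upload time instead of in the console. This sketch picks the Content-Type from the file extension, using the MIME types Apple lists for HLS (`application/x-mpegURL` for .m3u8 and `video/MP2T` for .ts). It then passes that Content-Type to boto3’s `upload_file` through `ExtraArgs`. The bucket and key names are placeholders.

```python
from pathlib import Path

# MIME types Apple recommends for HLS files.
HLS_CONTENT_TYPES = {
    ".m3u8": "application/x-mpegURL",
    ".ts": "video/MP2T",
}


def upload_args(filename):
    """Return boto3 ExtraArgs that set the Content-Type for an HLS file."""
    suffix = Path(filename).suffix.lower()
    if suffix not in HLS_CONTENT_TYPES:
        raise ValueError(f"not an HLS playlist or segment: {filename}")
    return {"ContentType": HLS_CONTENT_TYPES[suffix]}


def upload(filename, bucket, key):
    """Upload one file to S3 with an explicit Content-Type (needs boto3)."""
    import boto3  # imported lazily so upload_args works without boto3

    boto3.client("s3").upload_file(filename, bucket, key, ExtraArgs=upload_args(filename))


print(upload_args("BikeRide/BikeRideHI/prog_index.m3u8"))
```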

Time segment issues – whether using AWS Elastic Transcoder or the mediafilesegmenter command-line tool, I’ve always used 10-second segments. Unfortunately, either Elastic Transcoder isn’t exact or the mediastreamvalidator tool does not agree with its output. Here is a snippet of mediastreamvalidator’s output:

Error: Different target durations detected

--> Detail: Target duration: 10 vs Target duration: 13

--> Source: BikeRide/BikeRideHI.m3u8

--> Compare: BikeRide/hls_1m.m3u8

--> Detail: Target duration: 10 vs Target duration: 13

--> Source: BikeRide/BikeRideHI.m3u8

--> Compare: BikeRide/hls_400k.m3u8

--> Detail: Target duration: 10 vs Target duration: 13

--> Source: BikeRide/BikeRideHI.m3u8

--> Compare: BikeRide/hls_2m.m3u8

This basically says that the “HI” version of the playlist (created with Apple’s mediafilesegmenter command-line tool) has a target duration of 10 seconds, but the playlists created by AWS Elastic Transcoder (the three starting with “hls”) have 13, even though the job that created them was set to 10 seconds. I am still trying to figure this one out, so any pointers would be appreciated.
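To compare playlists before running the validator, a small parser like the one below reports each playlist’s declared `#EXT-X-TARGETDURATION` alongside its longest `#EXTINF`. The target must be at least as large as every segment duration rounded to the nearest integer, so a target of 13 implies that at least one segment runs 12.5 seconds or longer. One possible cause is that a segment can only start on a keyframe: if the encoder’s keyframe interval does not line up with 10 seconds, some segments run long. That explanation is an assumption and has not been confirmed for these files. The playlist text in the example is made up.

```python
import re


def target_duration(playlist_text):
    """Return the declared #EXT-X-TARGETDURATION as an int."""
    match = re.search(r"^#EXT-X-TARGETDURATION:(\d+)", playlist_text, re.MULTILINE)
    if match is None:
        raise ValueError("no #EXT-X-TARGETDURATION tag")
    return int(match.group(1))


def longest_segment(playlist_text):
    """Return the largest #EXTINF duration, in seconds."""
    durations = re.findall(r"^#EXTINF:([\d.]+)", playlist_text, re.MULTILINE)
    return max(float(d) for d in durations)


def summarize(playlists):
    """Map each playlist name to (declared target, longest segment)."""
    return {name: (target_duration(text), longest_segment(text)) for name, text in playlists.items()}


def targets_agree(summary):
    """True when every playlist declares the same target duration."""
    return len({target for target, _ in summary.values()}) == 1


hi = "#EXTM3U\n#EXT-X-TARGETDURATION:10\n#EXTINF:10.0,\na.ts\n#EXT-X-ENDLIST\n"
aws = "#EXTM3U\n#EXT-X-TARGETDURATION:13\n#EXTINF:12.6,\nb.ts\n#EXTINF:9.4,\nc.ts\n#EXT-X-ENDLIST\n"
summary = summarize({"BikeRideHI.m3u8": hi, "hls_1m.m3u8": aws})
print(summary, targets_agree(summary))
```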

File permissions – when hosting the playlist and segment files in an AWS S3 bucket, the files’ permissions always need to be reset after uploading so that they are readable (either “Public” or set up correctly for a secure file stream). This seems obvious, but working through the issues the validator raised had me uploading files multiple times, and this always came back to bite me as a validator error.
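One way to avoid resetting permissions after every upload is to set them as part of the upload. The sketch below uploads every .m3u8 and .ts file in a folder with the right Content-Type and the `public-read` canned ACL. This assumes the bucket still allows object ACLs. Buckets created since April 2023 have ACLs disabled by default, and for those a bucket policy (or CloudFront origin access) is needed instead. The folder, bucket and prefix names are placeholders.

```python
from pathlib import Path

CONTENT_TYPES = {".m3u8": "application/x-mpegURL", ".ts": "video/MP2T"}


def object_key(folder, path, prefix):
    """S3 key for a local file: prefix plus the path relative to the upload folder."""
    return f"{prefix.rstrip('/')}/{Path(path).relative_to(folder).as_posix()}"


def public_upload_args(content_type):
    """ExtraArgs that set the Content-Type and make the object publicly readable
    (only works if the bucket allows object ACLs)."""
    return {"ContentType": content_type, "ACL": "public-read"}


def upload_folder(folder, bucket, prefix):
    """Upload every playlist and segment under `folder` (needs boto3)."""
    import boto3  # imported lazily so the helpers work without boto3

    s3 = boto3.client("s3")
    for path in sorted(Path(folder).rglob("*")):
        content_type = CONTENT_TYPES.get(path.suffix.lower())
        if content_type is None:
            continue  # skip anything that is not an HLS playlist or segment
        s3.upload_file(str(path), bucket, object_key(folder, path, prefix),
                       ExtraArgs=public_upload_args(content_type))


print(object_key("out", "out/BikeRideHI/fileSequence0.ts", "BikeRide/"))
```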

HLS v3 vs. v4 – apart from v4 requiring separate audio and video streams, I’m still clueless as to when and why you would use one version over the other. It would seem that an audio-only stream would be needed for really, really low bandwidth. But separating out the video and audio streams is quite a bit of extra work (I would be thrilled if someone would leave a comment about a simple tool to do this). I can see some advantage in separate streams, in that they would let the client pick a better video stream paired with lower-quality audio, based on its own configuration. More to learn here for sure.
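For reference, this is roughly how separate audio is expressed in a master playlist. An `#EXT-X-MEDIA` tag declares an audio rendition group, and each `#EXT-X-STREAM-INF` points at that group through its `AUDIO` attribute. The sketch below is a minimal illustration with hypothetical file names and bitrates. It has not been checked against Elastic Transcoder’s actual HLSv4 output.

```python
def master_with_audio_group(video_variants, audio_uri, group_id="audio", name="Main"):
    """Master playlist in which every video variant shares one separate audio rendition.

    video_variants: list of (uri, bandwidth) tuples, bandwidth in bits per second.
    """
    lines = [
        "#EXTM3U",
        f'#EXT-X-MEDIA:TYPE=AUDIO,GROUP-ID="{group_id}",NAME="{name}",'
        f'DEFAULT=YES,AUTOSELECT=YES,URI="{audio_uri}"',
    ]
    for uri, bandwidth in video_variants:
        # BANDWIDTH should cover the video plus the audio it will be played with.
        lines.append(f'#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},AUDIO="{group_id}"')
        lines.append(uri)
    return "\n".join(lines) + "\n"


print(master_with_audio_group([("video_1m.m3u8", 1_100_000), ("video_2m.m3u8", 2_100_000)],
                              "audio_128k.m3u8"))
```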

Client usage unknowns – now that the videos work, how do I know which variant is being played? It would be good to know whether all four variants are being used, and under what circumstances (particular devices? bandwidths?). There is some tracking on AWS that I can potentially use to find out.
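On the server side, one rough approach is to count segment requests per variant in the CDN or S3 access logs. The sketch below assumes the log entries have already been reduced to request paths (for example, the URI field of CloudFront access logs). It also assumes that each variant can be recognised by its file-name prefix or its folder, as with the hypothetical names used here. On the device, AVPlayerItem’s access log, which reports indicated and observed bitrates, is another option.

```python
from collections import Counter
from pathlib import PurePosixPath


def variant_usage(request_paths, variant_markers):
    """Count .ts segment requests per variant.

    variant_markers: names that identify a variant, either as a segment
    file-name prefix (e.g. "hls_1m") or as a folder in the path (e.g. "BikeRideHI").
    """
    counts = Counter()
    for path in request_paths:
        p = PurePosixPath(path)
        if p.suffix != ".ts":
            continue  # only media segments show what was actually played
        for marker in variant_markers:
            if p.name.startswith(marker) or marker in p.parts:
                counts[marker] += 1
                break
    return counts


paths = [
    "/BikeRide/hls_400k00000.ts",
    "/BikeRide/hls_2m00001.ts",
    "/BikeRide/hls_2m00002.ts",
    "/BikeRide/BikeRideHI/fileSequence3.ts",
    "/BikeRide/index.m3u8",
]
print(variant_usage(paths, ["hls_400k", "hls_1m", "hls_2m", "BikeRideHI"]))
```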

I hope this helps anyone else working their way through using Apple’s HTTP Live Streaming. Any and all comments appreciated. Thanks to “tidbits” from Apple and the Apple forums for his assistance as I work my way through this.

To see the app this is used in, click on the App Store logo. I’d especially appreciate feedback from those outside the US on (a) how long it takes the videos to start and (b) how long it takes the quality to ramp up.

I just recently set up an automated workflow for uploading audio/video to S3, which triggers Lambda functions that use Elastic Transcoder to transcode and then upload HLS files to a separate bucket, which then uses CloudFront to serve the content to an iOS app. It seems to be working OK so far, but I’m still getting errors on the streams from Apple’s stream validator tool. For audio only, I’m getting `Measured peak bitrate compared to master playlist declared value exceeds error tolerance`, and for video I’m getting that plus `Different target durations detected`. Did you ever find out why Elastic Transcoder seems to produce this “sloppiness”? Some of the bitrates are ridiculously far off (`Detail: Measured: 63.31 kb/s, Master playlist: 5698.00 kb/s, Error: 8900.85%`)

I never found out why, and I did ask. Next time there is an AWS webinar on the subject, I will ask again. I have not found any issues with AWS transcoding in the apps I use it in…but Apple’s validator, as you said, certainly disagrees!

Hey, thanks for this, super helpful in guiding me through things. For HLSv4, use the HLS presets labelled _video_ and _audio_, and create the master playlist from at least one video output and one audio output to make sure you get both video and audio output.